Stories behind the innovation

Near and dear

Keepsakes passed down through generations of a connected family can reveal hidden characteristics of our colleagues. Cynthia Bryant shares some of hers, which showcase her mom’s creativity and how she encourages herself to fly.

“Your uniqueness makes you who you are.”

Inspired by her early love for fantasy and gaming, Christina Parker champions diversity and representation in the gaming industry, explaining why accurate portrayals matter and why it is important for players to see themselves in the virtual worlds they love.

Tosh’s journey through time

There are artifacts in our lives that represent how we connect to the world around us. Tosh Hudson shares how journaling, music, and plants, for him, represent a willingness to release, learn, and grow.

Art of cherishing memories

Sometimes our possessions remind us of our favorite places or home. Athena Chang shares the items that take her back to Taiwan, Prague, and New York.

When innovation and passion collide

Jerome Collins discusses the influence of his father’s guidance, his passion for art and music, and his innovative approach to driving positive change and representation in his professional sphere.

“At the end of the day, I think that’s what people want: to be heard.”

Guided by a gift for listening and a commitment to motherhood, Erin Jagelski shares how she navigated post-maternity challenges and pioneered support networks for parents in the workplace by blending her passion and leadership to foster inclusive environments.

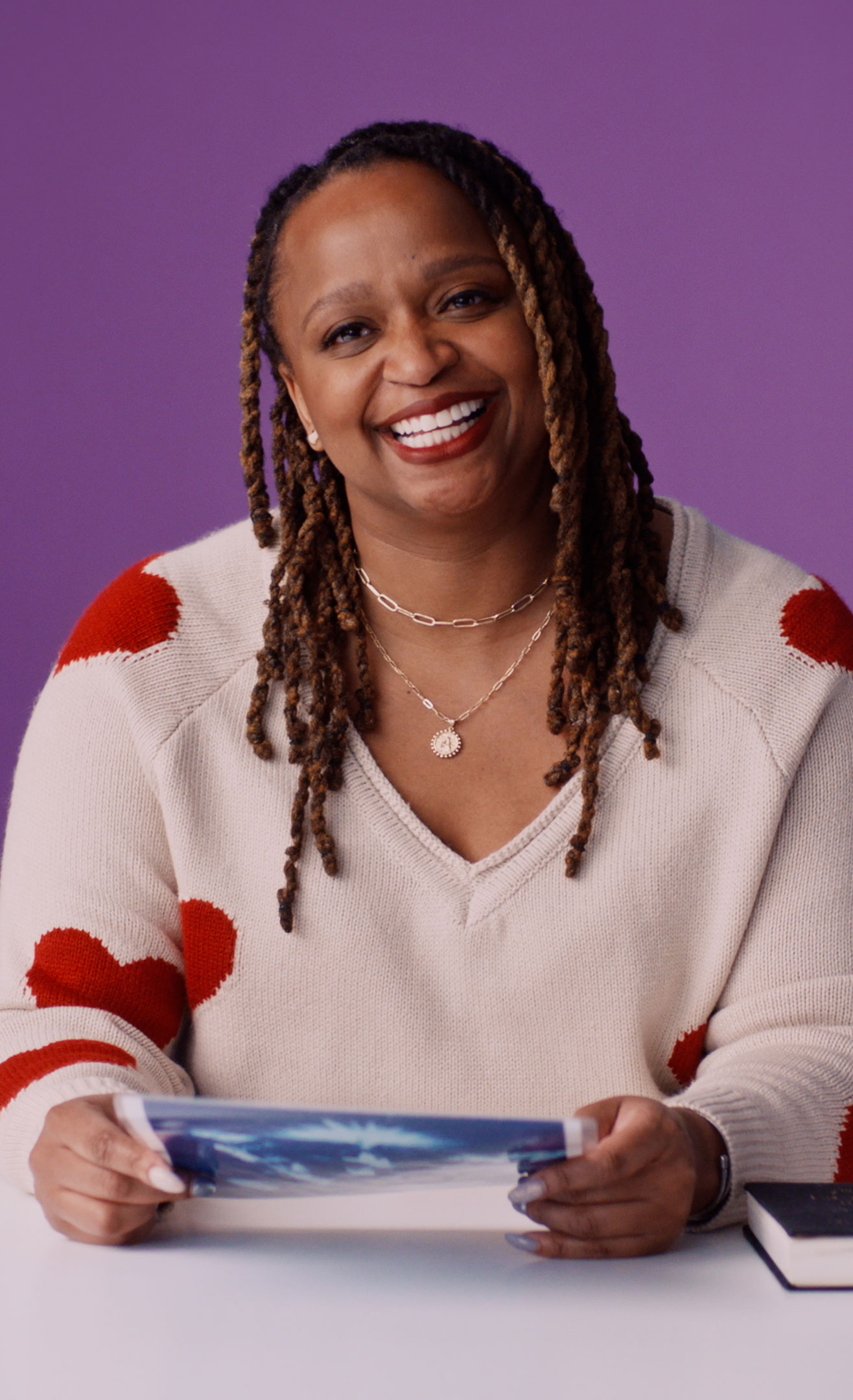

Melissa Curry’s treasures of heritage and achievement

Our possessions showcase the things that really matter to us. Melissa Curry unveils the artifacts that encapsulate her heritage, her achievements, and the bonds that shape her. Tell us about the artifacts that weave the fabric of your story.

Embrace your identity, embrace life

Inspired by her experiences moving from an Andean mining town, Kimberly Marreros Chuco discusses embracing one’s unique identity and learning from challenges, emphasizing the importance of adaptability and of accepting mistakes as part of growth.

Featured Artist: Tai Silva

Ashley Witherspoon: Innovator’s Inventory and the big plans she’s made

Our personal treasures hold the stories of who we are. Ashley Witherspoon shares the tangible symbols of her values and journey. What mementos narrate your life’s chapters?

This is my sazón

Ivelisse Capellan Heyer is a user experience designer who uses patience and her family to combat her own self-doubt.

Featured Artist: Sol Cotti

Nurturing inner peace

When Ethan Alexander started at Microsoft, he prioritized money over his wellbeing. Twelve years later, the senior customer success account manager and D&I storytelling host knows that the only way to truly take care of others is to first take care of yourself. Discover his story of gratitude and growth.

Featured Artist: Camila Abdanur

Master of messiness

As a mom and a tech leader, Elaine Chang has learned to embrace the chaos and put her “octopus mind” to work in service of innovation, at work and at home.

Featured Artist: Niege Borges

What leaders look like

Shrivaths Iyengar worried that coworkers would be reluctant to follow a leader who had disabilities. Instead, he discovered that his experiences made him a stronger, more empathetic manager.

Featured Artist: Ananya Rao-Middleton

Experiencing both sides

As a child, Ana Sofia Gonzalez crossed between Juárez, Mexico, and El Paso, Texas, every day to go to school. Learning how to live, communicate, and connect in both cultures has made her a better designer, mentor, and innovator.

Featured Artist: Dai Ruiz

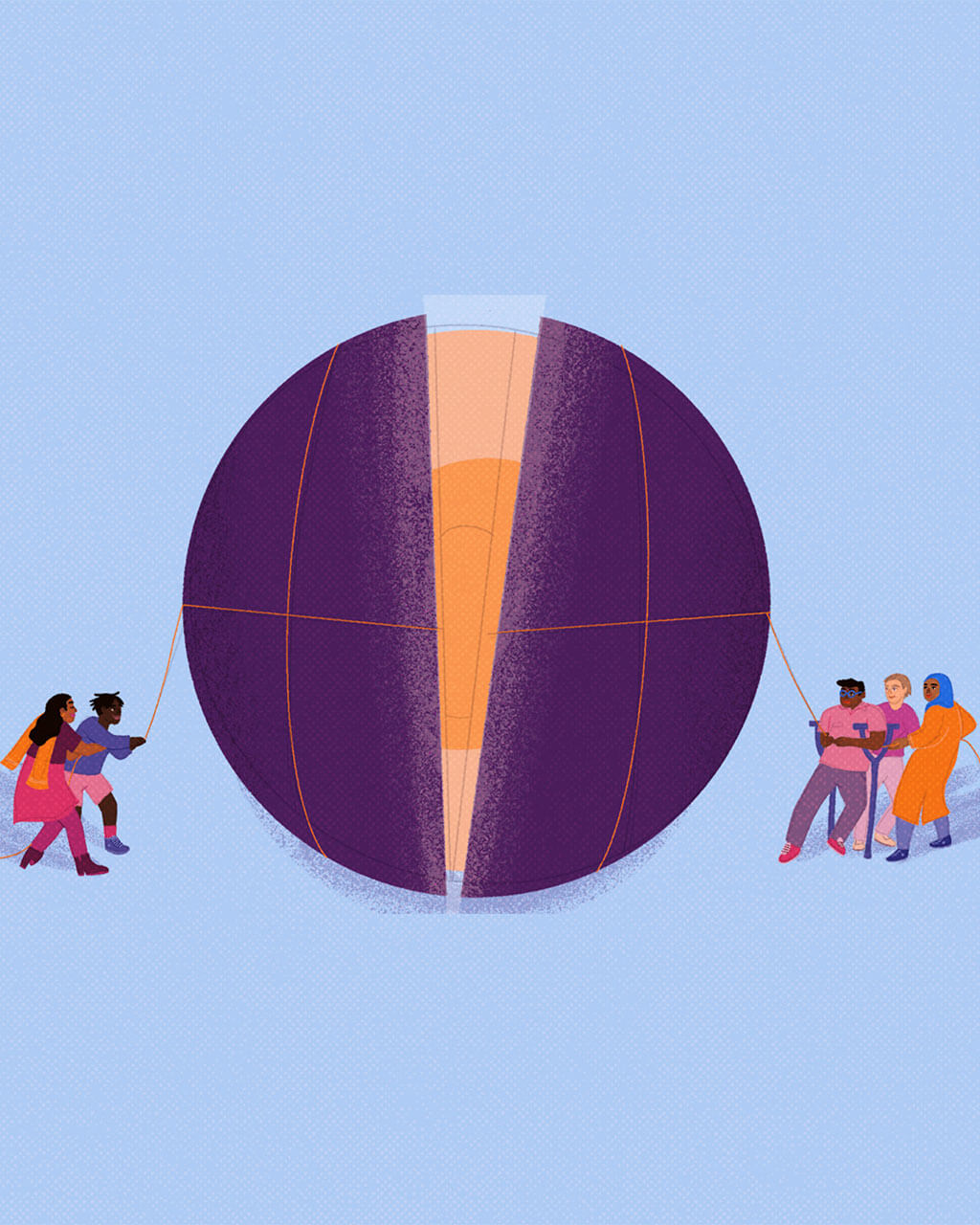

Real progress requires real work

Innovation demands intention.

Innovation thrives on insight.

Innovation requires introspection.

Innovation calls for investment.

“If there’s a family issue … you have enough grace to be able to take care of it.”